We are interested in the analysis (including at the semantic level) and interpretation of digital visual content, such as images and videos. We are also interested in incorporating the associated textual and commonsense knowledge to enhance the analysis and interpretation of such content.

Our current work and interests include (but are not limited to):

- Video matching and similarity detection.

- Semantic video annotation.

Visual Content Analysis

In general, we are interested in scene classification, motion analysis, and object detection, classification, and tracking, among other tasks under investigation.

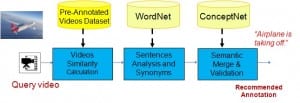

We have been investigating novel approaches that incorporate knowledge and spatio-temporal information to interpret scenes and detect visual events in wide-domain, uncontrolled videos (see figure below). [Projects….] [Publications….]

Target applications include video segmentation, indexing, abstraction/skimming, classification/categorisation, scene understanding and event detection, semantic information extraction, and semantic-based video search and retrieval, to name just a few.

Textual Content Analysis

Textual content is also under investigation. In particular, we are interested in the semantic analysis of blog content and social networks to extract implicit features, emotions, personality traits, and stylistic attributes of authors and users.

We have been analyzing the patterns of users in the blogosphere, detecting identity, and inferring groups and communities by applying text mining and adapting psycholinguistic properties (see figure below). This work aims to identify the authors of new posts or comments and may be used to match friends. It can also be useful in security and forensic applications.

We are interested in extending this work to dialogues in the future, as well as to other languages (such as Arabic). [Projects….] [Publications….]
